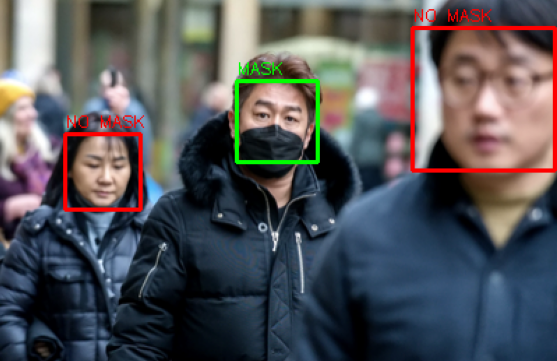

Facial Recognition & Mask Detection

The Context

This was built immediately after completing my MSc, during a job search in a world that had largely shut down. Two things made it feel like the right project for that moment: I had just worked with vision models during my programme, so the technical pieces were fresh; and mask-wearing was one of the defining public health realities of 2020, which made the problem feel genuinely relevant rather than academic.

It's a small, focused project — a two-stage pipeline that detects faces with OpenCV and classifies each one as masked or unmasked using a Keras CNN. But it sat at the intersection of a topic I care about deeply and a set of skills I was actively trying to consolidate, and it gave me my first real hands-on experience combining classical computer vision with a learned neural classifier into something that actually worked end-to-end.

Why Computer Vision

Vision is an area of AI I find genuinely compelling — not just as an engineering problem, but as a conceptual one. I've been interested in neurobiology since my undergraduate years, and the visual cortex in particular fascinates me: the way biological systems extract structure from raw photoreceptor signals through successive layers of abstraction is strikingly analogous to how convolutional neural networks process image data. That parallel isn't a coincidence — CNNs were partly inspired by that biology — and I find that connection meaningful.

Beyond the academic interest, I've been thinking for a long time about how visual context could be fed to a language model to give a system genuine awareness of its surroundings. That's the direction computer vision is heading in more complex robotics work, and it's exactly what ORION's Phase 4 is designed to explore. This project was an early, modest step in that direction; at the time I built it, a fully capable local robot felt far out of reach. What I learned will still be useful when that phase arrives.

How It Works

- Detection (OpenCV Haar Cascades): A pre-trained Haar cascade classifier detects face regions in the input image, returning bounding box coordinates for each detected face.

- Crop (Face Extraction): Each detected face region is cropped, resized to the model's expected input dimensions, and normalised before being passed to the classifier.

- Classify (Keras CNN): A CNN using transfer learning from ImageNet weights classifies each face as masked or unmasked, returning a probability score. The two-stage pipeline design, with classical detection feeding a learned classifier, was my own architectural decision, informed by literature I was reading at the time.

- Output (Annotated Visualisation): The original image is returned with colour-coded bounding boxes and confidence scores labelled for each detected face.
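The four steps above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the project's actual code: the 224×224 input size, the divide-by-255 normalisation, the single sigmoid output read as P(mask), the 0.5 threshold, the green/red colour scheme, and the helper names `clamp_box`, `describe`, `detect_faces`, and `annotate` are all hypothetical. The OpenCV and Keras calls are imported inside the functions so the pure helpers can be used without either library installed.

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (x, y, width, height), the layout OpenCV returns

INPUT_SIZE = (224, 224)  # assumed classifier input size, not stated in the write-up


def clamp_box(box: Box, img_w: int, img_h: int) -> Box:
    """Clip a bounding box so the crop stays inside the image."""
    x, y, w, h = box
    x0, y0 = max(0, x), max(0, y)
    x1, y1 = min(img_w, x + w), min(img_h, y + h)
    return x0, y0, max(0, x1 - x0), max(0, y1 - y0)


def describe(p_mask: float, threshold: float = 0.5):
    """Map an assumed P(mask) score to a text label and a BGR box colour."""
    if p_mask >= threshold:
        return f"mask {p_mask:.0%}", (0, 255, 0)      # green
    return f"no mask {1 - p_mask:.0%}", (0, 0, 255)   # red (OpenCV uses BGR)


def detect_faces(image) -> List[Box]:
    """Stage 1: Haar cascade face detection on a BGR image."""
    import cv2  # imported lazily; requires opencv-python

    cascade = cv2.CascadeClassifier(
        cv2.data.haarcascades + "haarcascade_frontalface_default.xml"
    )
    gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
    faces = cascade.detectMultiScale(gray, scaleFactor=1.1, minNeighbors=5)
    return [tuple(int(v) for v in face) for face in faces]


def annotate(image, model):
    """Stages 2-4: crop each face, classify it, and draw the result in place."""
    import cv2

    img_h, img_w = image.shape[:2]
    for box in detect_faces(image):
        x, y, w, h = clamp_box(box, img_w, img_h)
        if w == 0 or h == 0:
            continue  # box fell entirely outside the image
        # Crop, convert to RGB, resize, and scale pixels to [0, 1] (assumed).
        face = cv2.cvtColor(image[y:y + h, x:x + w], cv2.COLOR_BGR2RGB)
        face = cv2.resize(face, INPUT_SIZE).astype("float32") / 255.0
        # Assumes `model` is a Keras model with one sigmoid output = P(mask).
        p_mask = float(model.predict(face[None, ...], verbose=0)[0][0])
        text, colour = describe(p_mask)
        cv2.rectangle(image, (x, y), (x + w, y + h), colour, 2)
        cv2.putText(image, text, (x, max(15, y - 8)),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.6, colour, 2)
    return image
```

With a trained model loaded, usage would look something like `annotate(cv2.imread("photo.jpg"), model)`, where the file name is a placeholder. Keeping the box clamping and label formatting separate from the OpenCV calls makes those parts easy to check on their own.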

Companion Project

A companion project — CNN & Transfer Learning Comparison — benchmarks two CNN architectures against each other on the same task: one trained from scratch, one using InceptionResNetV2 transfer learning. The transfer learning approach outperformed the scratch-trained model, which was in line with what the literature at the time suggested. Useful confirmation, even if not a surprise.
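For illustration, a comparison like this might be set up along the following lines. This is a hedged sketch, not the companion project's code: the layer sizes of the scratch model, the frozen base, the single sigmoid head, and the helper names `build_scratch_cnn`, `build_transfer_cnn`, and `accuracy` are assumptions. Only the use of InceptionResNetV2 with ImageNet weights comes from the write-up.

```python
from typing import Sequence

INPUT_SHAPE = (299, 299, 3)  # InceptionResNetV2's default input size in Keras


def build_scratch_cnn():
    """Small CNN trained from random initialisation (layer sizes are illustrative)."""
    from tensorflow.keras import layers, models  # imported lazily

    return models.Sequential([
        layers.Input(shape=INPUT_SHAPE),
        layers.Conv2D(32, 3, activation="relu"),
        layers.MaxPooling2D(),
        layers.Conv2D(64, 3, activation="relu"),
        layers.MaxPooling2D(),
        layers.GlobalAveragePooling2D(),
        layers.Dense(1, activation="sigmoid"),  # assumed output: P(mask)
    ])


def build_transfer_cnn():
    """ImageNet-pretrained InceptionResNetV2 base with a new binary head."""
    from tensorflow.keras import layers, models
    from tensorflow.keras.applications import InceptionResNetV2

    base = InceptionResNetV2(weights="imagenet", include_top=False,
                             input_shape=INPUT_SHAPE)
    base.trainable = False  # assumption: only the new head is trained
    # Inputs should go through
    # tensorflow.keras.applications.inception_resnet_v2.preprocess_input first.
    return models.Sequential([
        base,
        layers.GlobalAveragePooling2D(),
        layers.Dense(1, activation="sigmoid"),
    ])


def accuracy(p_mask: Sequence[float], labels: Sequence[int],
             threshold: float = 0.5) -> float:
    """Fraction of correct predictions; apply to both models on the same test set."""
    if len(p_mask) != len(labels) or not labels:
        raise ValueError("need equal-length, non-empty predictions and labels")
    correct = sum((p >= threshold) == bool(y) for p, y in zip(p_mask, labels))
    return correct / len(labels)
```

Scoring both models with the same metric on the same held-out images is what makes the comparison fair. Any difference then reflects the training approach rather than the evaluation setup.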